"What is CUDA?" is a question that comes up more and more often as artificial intelligence, data science, and high-performance computing continue to dominate modern technology. CUDA, which stands for Compute Unified Device Architecture, is a computing platform created by NVIDIA that allows developers to use graphics processing units (GPUs) for general-purpose computing beyond traditional graphics tasks.

Today, CUDA plays a central role in the global AI ecosystem. The platform continues to evolve through ongoing updates to the CUDA Toolkit, with the latest versions expanding GPU performance, programming tools, and compatibility across cloud platforms, supercomputers, and AI infrastructure.

From powering advanced neural networks to accelerating scientific research, CUDA has become one of the most influential technologies behind modern computing.

Understanding CUDA: A Simple Explanation

CUDA is a parallel computing platform and programming model developed by NVIDIA that enables developers to harness the massive processing power of GPUs for complex calculations.

Traditionally, GPUs were designed to render graphics for video games and visual applications. However, GPUs contain thousands of small processing cores capable of performing calculations simultaneously. CUDA allows programmers to tap into that parallel processing power.

Instead of relying solely on a computer’s CPU, developers can write code that runs directly on a GPU.

Key facts about CUDA include:

- Announced by NVIDIA in 2006, with the first public toolkit release following in 2007

- Enables general-purpose computing on GPUs (GPGPU)

- Programmed natively in C and C++, with Fortran supported through CUDA Fortran and Python through libraries such as Numba and CuPy

- Widely used in AI, machine learning, data science, and simulations

This innovation transformed graphics cards into powerful computing accelerators capable of processing enormous datasets in parallel.

The History Behind CUDA

CUDA emerged from early research into GPU computing.

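During the early 2000s, researchers explored ways to use graphics processors for scientific calculations, often by recasting their problems as graphics operations. GPUs of that era already processed many pixels and vertices in parallel, which made them attractive for parallel workloads even though they were awkward to program.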

NVIDIA introduced CUDA to simplify GPU programming and make it accessible to developers.

Important milestones include:

| Year | Milestone |
|---|---|
| Early 2000s | Experiments begin using GPUs for computing |
| 2006 | NVIDIA announces CUDA alongside the GeForce 8800 series, its first CUDA-capable GPUs |
| 2007 | First public release of the CUDA Toolkit |
| 2010s | CUDA becomes central to AI and deep learning |

CUDA allowed programmers to write code that directly accesses GPU processing power, eliminating many of the complexities that previously made GPU programming difficult.

How CUDA Works

CUDA works by dividing large computational problems into thousands of smaller tasks that GPUs can process simultaneously.

Instead of sequential processing, CUDA uses parallel processing.
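To make the idea of splitting a problem into many small tasks more concrete, here is a minimal sketch in plain Python that emulates, on the CPU, how a CUDA kernel typically maps each thread to one array element. The names `add_kernel`, `emulate_launch`, and `vector_add` are hypothetical and exist only for this illustration. In real CUDA C++ the index would come from the built-in variables `blockIdx.x`, `blockDim.x`, and `threadIdx.x`, and the threads would run concurrently on the GPU instead of inside a loop.

```python
def add_kernel(block_idx, block_dim, thread_idx, a, b, out):
    """Body executed by one emulated thread: add a single pair of elements."""
    # Same formula a CUDA kernel uses: blockIdx.x * blockDim.x + threadIdx.x
    i = block_idx * block_dim + thread_idx
    # Threads in the last block may fall past the end of the data, so guard.
    if i < len(out):
        out[i] = a[i] + b[i]


def emulate_launch(kernel, grid_dim, block_dim, *args):
    """Run the kernel once per (block, thread) pair, sequentially on the CPU.

    On a GPU these invocations would execute in parallel; the loops here
    only show which thread indices exist.
    """
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


def vector_add(a, b, block_dim=4):
    """Add two equal-length lists using the emulated launch."""
    n = len(a)
    out = [0] * n
    # Round up so that every element is covered by some thread.
    grid_dim = (n + block_dim - 1) // block_dim
    emulate_launch(add_kernel, grid_dim, block_dim, a, b, out)
    return out


if __name__ == "__main__":
    print(vector_add([1, 2, 3, 4, 5], [10, 20, 30, 40, 50]))
```

With five elements and a block size of 4, the emulation creates two blocks (eight threads in total), and the bounds check keeps the three surplus threads from touching memory they do not own.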

A typical CUDA workflow looks like this:

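- A developer writes a program that uses CUDA, either through custom GPU functions (kernels) or through prebuilt CUDA libraries.

- The CPU (the host) copies input data to GPU memory and launches the work on the GPU.

- The GPU (the device) executes thousands of lightweight threads in parallel.

- Results are copied back to the CPU.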
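The steps above can be sketched as a small CPU-only emulation in Python. Everything here is a stand-in: `FakeDevice`, `scale_kernel`, and `run_workflow` are hypothetical names, and the "device memory" is just a Python dictionary. In real CUDA C++ the copies would typically be done with `cudaMemcpy`, and the kernel's threads would run in parallel on the GPU.

```python
class FakeDevice:
    """Hypothetical stand-in for a GPU that has its own, separate memory."""

    def __init__(self):
        self.memory = {}

    def copy_to_device(self, name, host_data):
        # Real CUDA: cudaMemcpy with cudaMemcpyHostToDevice
        self.memory[name] = list(host_data)

    def launch(self, kernel, n_threads, *buffer_names):
        buffers = [self.memory[name] for name in buffer_names]
        # A real GPU would run these threads concurrently.
        for thread_id in range(n_threads):
            kernel(thread_id, *buffers)

    def copy_to_host(self, name):
        # Real CUDA: cudaMemcpy with cudaMemcpyDeviceToHost
        return list(self.memory[name])


def scale_kernel(thread_id, data):
    """Work done by one emulated thread: double a single element."""
    data[thread_id] = data[thread_id] * 2


def run_workflow(host_input):
    device = FakeDevice()
    device.copy_to_device("data", host_input)              # CPU sends data to the GPU
    device.launch(scale_kernel, len(host_input), "data")   # GPU runs one thread per element
    return device.copy_to_host("data")                     # results return to the CPU


if __name__ == "__main__":
    print(run_workflow([1, 2, 3]))
```

Note that the host list is never modified directly: the kernel only sees the device copy, which mirrors the separation between CPU and GPU memory that the explicit copy steps exist to bridge.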

This architecture dramatically improves performance for workloads involving large datasets.

For example:

| Task | CPU Processing | CUDA GPU Processing |
|---|---|---|
| Image recognition | Sequential operations | Parallel pixel analysis |
| AI training | Slower matrix calculations | Massively parallel matrix operations |
| Scientific simulation | Limited CPU cores | Thousands of GPU cores |

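Because GPUs can execute thousands of threads at the same time, CUDA can deliver large speedups over CPU-only code for workloads that parallelize well. Tasks that are mostly sequential, or that spend most of their time moving data between CPU and GPU, benefit far less.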

The CUDA Toolkit: What Developers Use

CUDA is part of a broader development ecosystem called the CUDA Toolkit.

The toolkit provides the tools developers need to build GPU-accelerated applications.

Key components include:

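- CUDA compiler (NVCC) – Compiles CUDA source code, separating CPU code from GPU code and translating the GPU portion into GPU instructions

- GPU libraries – Prebuilt libraries for mathematics and data processing, such as cuBLAS and cuFFT, that AI and scientific software build on

- Debugging and profiling tools – Tools for finding errors and analyzing performance

- Runtime environment – Manages GPU memory and launches GPU programs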

Modern versions of the CUDA Toolkit include improvements to compilers, GPU memory management, and support for newer programming frameworks.

These updates allow developers to build faster and more efficient GPU-accelerated applications across many computing environments.

Why CUDA Is Important for Artificial Intelligence

CUDA became especially important during the rise of artificial intelligence and machine learning.

AI models depend heavily on mathematical operations such as matrix multiplications and tensor calculations. GPUs are highly efficient at performing these tasks because they can process thousands of operations in parallel.
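A short sketch shows why matrix multiplication suits GPUs so well. In the plain-Python example below, each output element is computed by its own call to `matmul_element` (a hypothetical name), and no call depends on the result of any other. On a GPU, each of those calls could in principle be handed to a separate thread. This is only a conceptual illustration: real frameworks rely on heavily tuned libraries and specialized hardware rather than a naive loop like this one.

```python
def matmul_element(row, col, a, b):
    """Work for one emulated thread: the dot product for a single output cell."""
    return sum(a[row][k] * b[k][col] for k in range(len(b)))


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    n_rows, n_cols = len(a), len(b[0])
    # Every (row, col) pair is independent of all the others, so on a GPU
    # each one could be assigned to its own thread.
    return [
        [matmul_element(r, c, a, b) for c in range(n_cols)]
        for r in range(n_rows)
    ]


if __name__ == "__main__":
    print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

For two 1,000 × 1,000 matrices this structure yields one million independent dot products, which is the kind of workload that keeps thousands of GPU cores busy.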

CUDA provides the programming interface that allows AI frameworks to use GPU acceleration.

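Major AI and data science tools that use CUDA for acceleration on NVIDIA GPUs include:

- TensorFlow

- PyTorch

- JAX

- RAPIDS (GPU-accelerated data science libraries)

- cuDNN and other CUDA-accelerated libraries that these frameworks build on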

Without GPU acceleration through CUDA, training advanced neural networks would take significantly longer and require far more computing resources.

Large AI models often rely on clusters of CUDA-enabled GPUs working together in data centers.

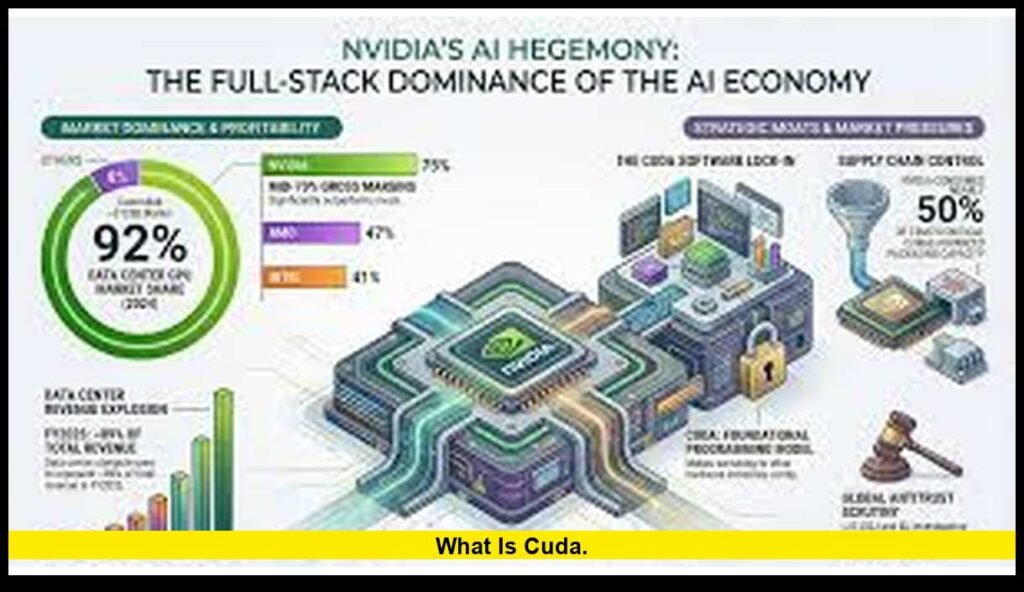

CUDA and NVIDIA’s Rise in the AI Industry

CUDA has played a major role in NVIDIA’s transformation from a graphics hardware company into a leader in artificial intelligence computing.

Originally known for gaming graphics cards, NVIDIA expanded its focus toward AI infrastructure, data center GPUs, and high-performance computing systems.

CUDA provides the software ecosystem that allows developers to fully utilize NVIDIA GPUs.

This combination of hardware and software has helped drive widespread adoption of GPU computing across industries.

Today, many of the world’s largest AI systems rely on CUDA-accelerated GPUs for training and inference.

Where CUDA Is Used Today

CUDA is widely used across many industries.

Artificial Intelligence

Training neural networks and running machine learning algorithms.

Scientific Research

Running simulations in physics, chemistry, and climate science.

Healthcare

Analyzing medical images and performing genomic research.

Autonomous Vehicles

Processing sensor data and training self-driving systems.

Financial Modeling

Running risk analysis and complex financial simulations.

Gaming and Graphics

Supporting advanced rendering techniques and visual effects.

The ability to process massive datasets quickly makes CUDA valuable wherever high-performance computing is required.

CUDA in Supercomputers and Data Centers

Many of the world’s fastest supercomputers rely heavily on GPU acceleration.

CUDA plays an essential role in these systems by allowing developers to write applications that can run efficiently on thousands of GPUs.

Examples of CUDA-powered computing environments include:

- AI research clusters

- national laboratory supercomputers

- weather prediction models

- molecular simulations

Modern data centers increasingly depend on GPU acceleration for artificial intelligence workloads and large-scale data analytics.

CUDA enables applications to scale from a single GPU to thousands of GPUs in cloud infrastructure.

CUDA and GPU Hardware

CUDA works specifically with NVIDIA GPUs. This tight integration between software and hardware helps optimize performance.

Each NVIDIA GPU contains CUDA cores, which are small processing units designed for parallel computation.

For example:

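| GPU Type | Approximate CUDA Cores |
|---|---|
| Consumer GPUs | Hundreds to more than ten thousand, depending on the model |
| Data center GPUs | Thousands to more than ten thousand |
| AI-focused data center GPUs | Comparable counts, complemented by Tensor Cores specialized for matrix operations |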

More CUDA cores generally allow the GPU to handle more parallel operations at once.

This architecture allows GPUs to process extremely large computational workloads efficiently.
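Datasets are often far larger than the number of threads a program launches, and CUDA threads are not tied one-to-one to CUDA cores: the hardware schedules threads onto whatever cores are available. One common way to handle this, usually called a grid-stride loop, has each thread process several elements spaced a fixed distance apart. The sketch below emulates that pattern on the CPU in plain Python; `grid_stride_indices` and `square_all` are hypothetical names used only for illustration.

```python
def grid_stride_indices(thread_id, total_threads, n):
    """Indices one thread handles when n exceeds the number of threads.

    CUDA C++ equivalent of the loop header:
    for (int i = blockIdx.x * blockDim.x + threadIdx.x; i < n;
         i += blockDim.x * gridDim.x)
    """
    return list(range(thread_id, n, total_threads))


def square_all(data, total_threads=4):
    """Square every element using a fixed number of emulated threads."""
    out = [None] * len(data)
    for thread_id in range(total_threads):  # concurrent on a real GPU
        for i in grid_stride_indices(thread_id, total_threads, len(data)):
            out[i] = data[i] * data[i]
    return out


if __name__ == "__main__":
    print(grid_stride_indices(1, 4, 10))
    print(square_all(list(range(10))))
```

With 4 threads and 10 elements, thread 1 handles indices 1, 5, and 9. Together the threads cover every index exactly once, so the same code works whether the GPU can run a few hundred threads at once or many thousands.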

Recent Developments in CUDA

CUDA continues to evolve as computing needs grow.

Recent updates to the CUDA ecosystem focus on improving developer tools, expanding support for modern programming environments, and optimizing GPU performance for artificial intelligence workloads.

Improvements have also focused on:

- enhanced GPU memory handling

- improved compiler performance

- expanded compatibility with new hardware

- better integration with AI development frameworks

These improvements help developers build faster and more scalable applications.

CUDA vs CPU Computing

Understanding the difference between CPU and GPU computing helps explain why CUDA is so powerful.

| Feature | CPU | CUDA GPU |
|---|---|---|
| Core count | Typically a few to a few dozen powerful cores | Thousands of simpler cores |
| Best tasks | Sequential workloads | Parallel workloads |
| Examples | Operating systems, apps | AI training, simulations |
| Processing style | Optimized for fast serial execution | Optimized for high-throughput parallel execution |

CPUs and GPUs work together in modern computing systems.

The CPU manages system operations and task coordination, while CUDA-enabled GPUs handle parallel calculations.

The Future of CUDA

CUDA is expected to remain a central technology in AI and high-performance computing.

As artificial intelligence models grow larger and datasets expand, demand for GPU acceleration continues to increase.

Future CUDA development will likely focus on:

- improving AI training efficiency

- accelerating inference workloads

- deeper integration with cloud platforms

- optimizing GPU performance for new computing architectures

Because CUDA has a large and mature developer ecosystem, it remains one of the most important platforms in modern computing.

Final Thoughts

CUDA transformed graphics processors into powerful engines for scientific computing, artificial intelligence, and large-scale data processing.

From its early development in the mid-2000s to its role in today’s AI infrastructure, CUDA has reshaped how developers use GPUs. It now powers everything from research laboratories to global cloud computing systems.

Understanding what CUDA is helps explain the technology behind many of the most advanced innovations in modern computing.

What are your thoughts on CUDA and GPU computing? Share your perspective in the comments and stay tuned for more technology insights.